AI grading that cites its work.

AutoGrader combines large language models with human oversight to deliver transparent, evidence-anchored assessment at scale — every score backed by a quote from the student's own submission.

AutoGrader handles the full complexity of higher-education assessment — from OCR to policy learning — so instructors can focus on teaching.

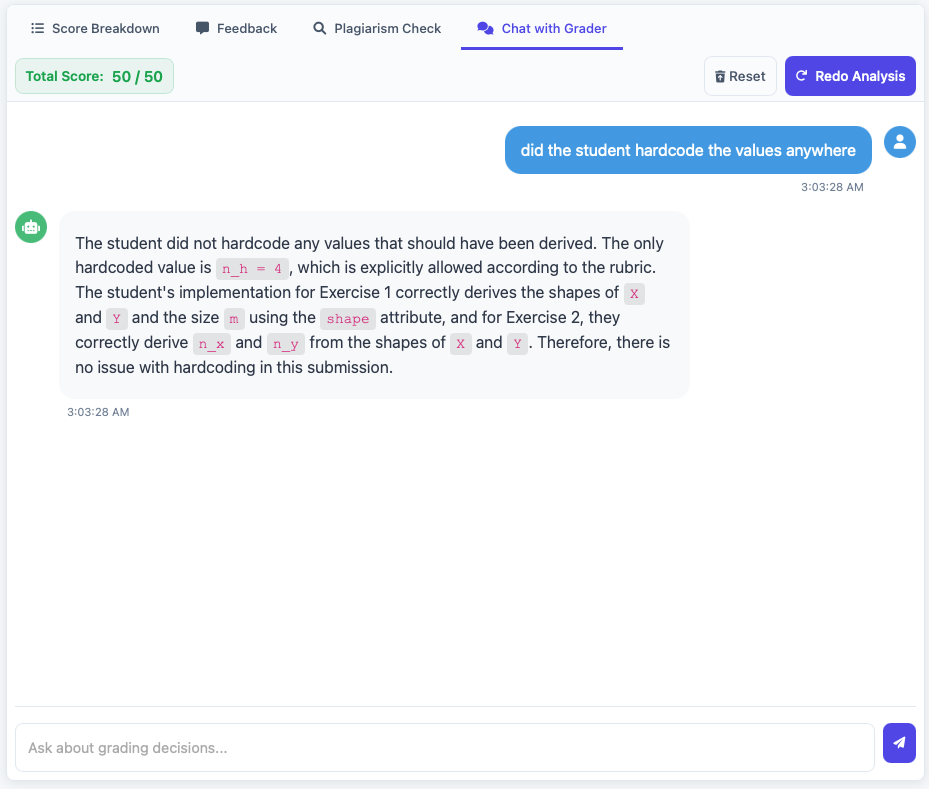

Every point awarded or deducted is backed by an exact quoted passage from the student's submission — no black-box decisions, full auditability.

When TAs override AI scores, the system learns generalizable IF/THEN grading rules that automatically apply to all future submissions in that course.

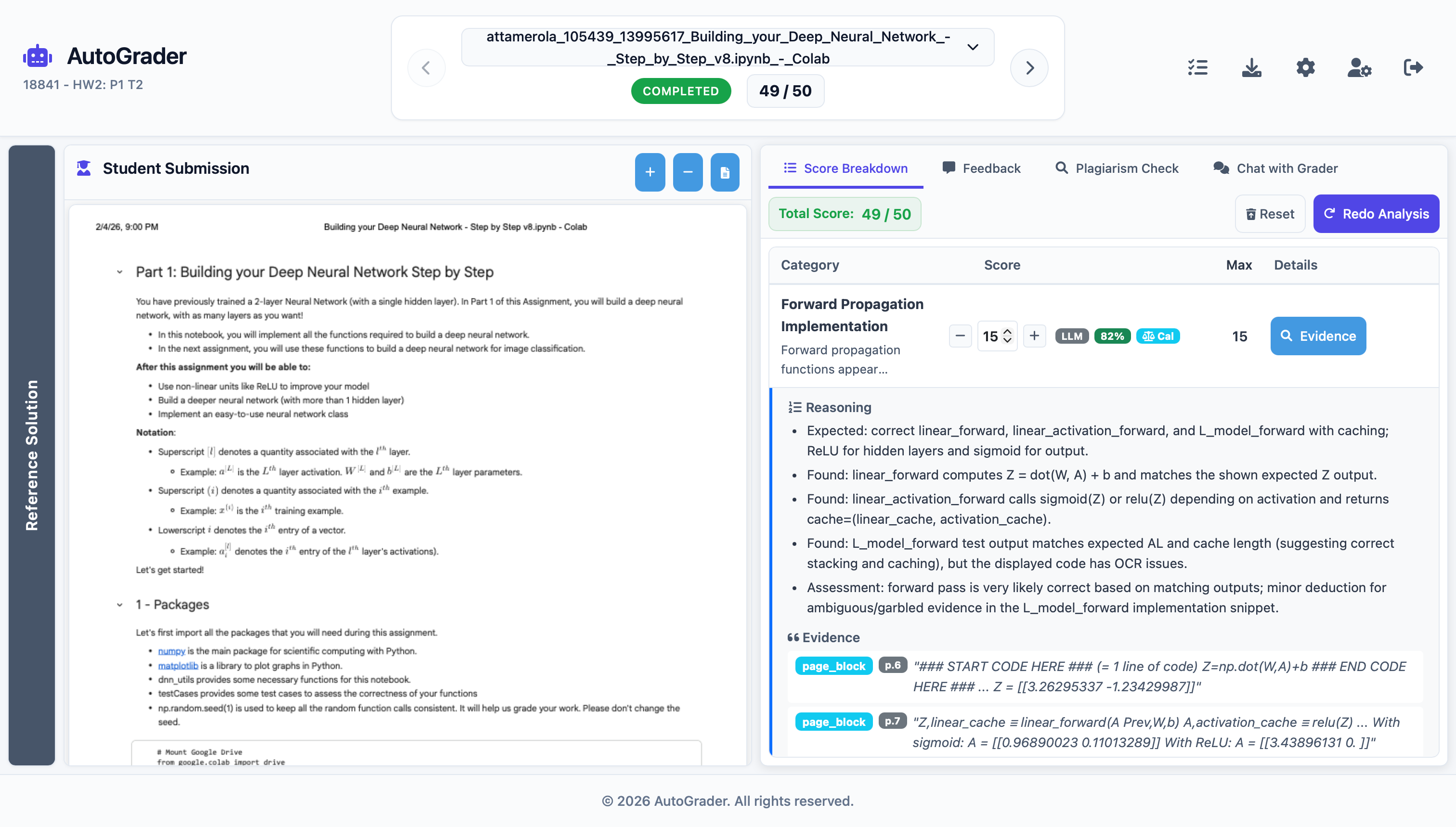

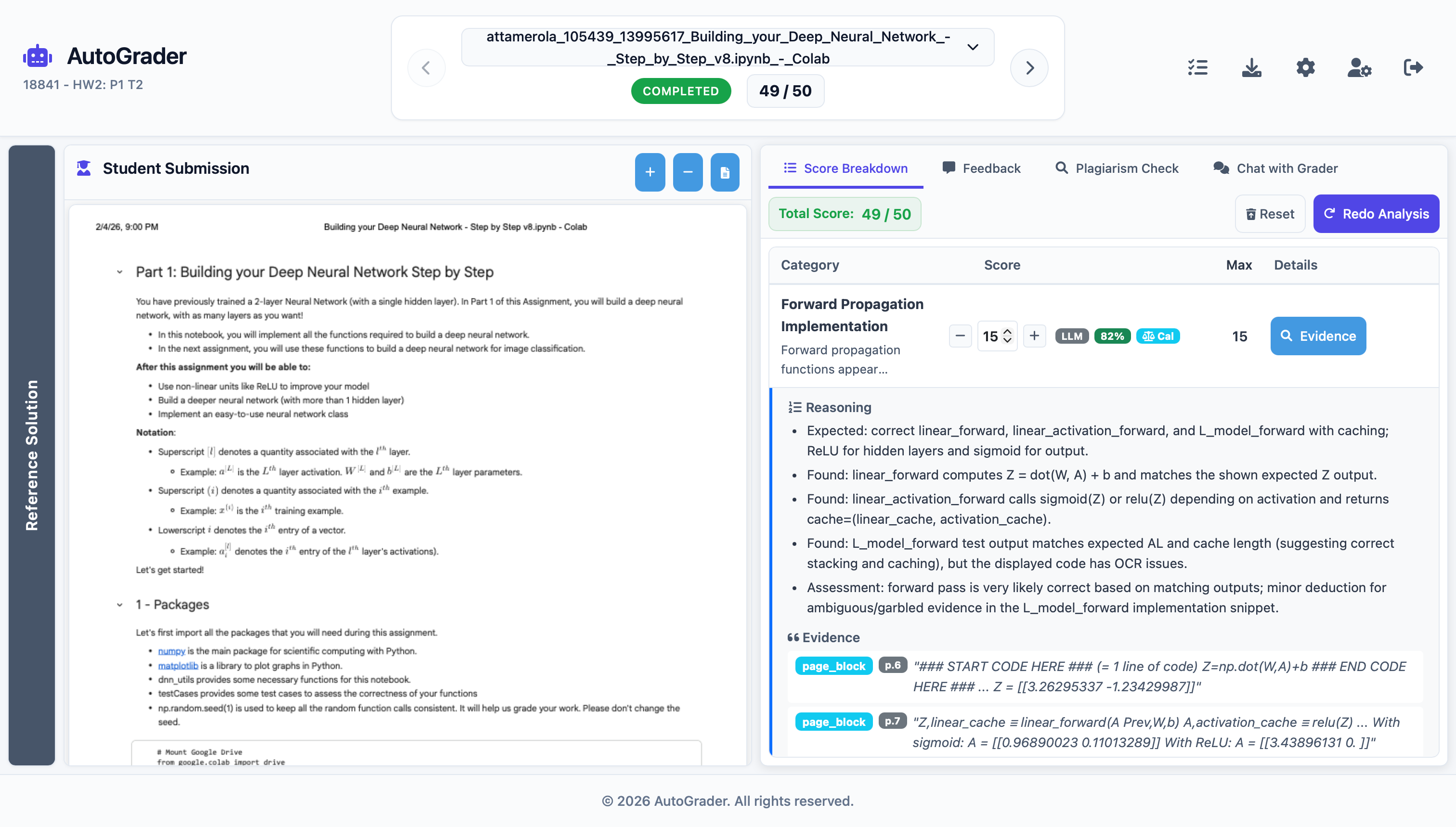

A three-panel workstation gives TAs simultaneous access to the reference solution, student submission, and an interactive AI grading console.

Accepts PDFs, Python files, Jupyter notebooks, Canvas quiz exports, HTML, and Markdown — reflecting the reality of today's mixed-format coursework.

Upload a reference solution and answer a few questions — AutoGrader generates a complete, calibrated rubric through a clarifying dialogue workflow.

Process entire class sections with configurable concurrency. Export grade reports as Excel, CSV, PDF with histograms, or JSON for your LMS.
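
As a rough illustration of what configurable concurrency can look like, the sketch below caps the number of submissions graded at once with an `asyncio.Semaphore`. The names `grade_submission`, `grade_batch`, and `max_concurrency` are hypothetical and are not taken from AutoGrader itself; the grading call is a placeholder.

```python
import asyncio


async def grade_submission(submission_id: str) -> dict:
    # Hypothetical stand-in for the real work (OCR, LLM grading, and so on).
    await asyncio.sleep(0.01)
    return {"submission_id": submission_id, "status": "graded"}


async def grade_batch(submission_ids: list[str], max_concurrency: int = 4) -> list[dict]:
    # The semaphore lets at most `max_concurrency` gradings run at the same time.
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(submission_id: str) -> dict:
        async with semaphore:
            return await grade_submission(submission_id)

    # gather() returns results in the same order as the inputs.
    return await asyncio.gather(*(bounded(s) for s in submission_ids))


results = asyncio.run(
    grade_batch([f"student-{i}" for i in range(10)], max_concurrency=3)
)
```

Raising `max_concurrency` shortens wall-clock time for a section until an external limit, such as an API rate limit, becomes the bottleneck.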

A rigorous multi-stage architecture ensures that every decision is traceable, every score is defensible, and every override makes the system smarter.

Dual OCR (Mistral AI + PyMuPDF) produces rich markdown with block-level source maps — page, block, and line references for every passage.
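
One way to picture a block-level source map is as a list of text lines, each tagged with its page, block, and line position. The sketch below is an assumption about the shape of such a map, not AutoGrader's actual schema; `SourceRef`, `MappedLine`, and `find_passage` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    page: int
    block: int
    line: int


@dataclass(frozen=True)
class MappedLine:
    text: str
    ref: SourceRef


def find_passage(lines: list[MappedLine], quote: str) -> list[SourceRef]:
    """Return the source reference of every line that contains the quote."""
    return [m.ref for m in lines if quote in m.text]


# Illustrative data: two lines from block 2 on page 1 of a submission.
source_map = [
    MappedLine("def mean(xs):", SourceRef(page=1, block=2, line=1)),
    MappedLine("    return sum(xs) / len(xs)", SourceRef(page=1, block=2, line=2)),
]
refs = find_passage(source_map, "sum(xs) / len(xs)")
```

With a map like this, any quoted passage can be traced back to the exact spot in the original document.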

An LLM grades each rubric category with cited evidence (max 250 chars per quote), explicit reasoning, and calibrated confidence scores.
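
The evidence requirement can be checked mechanically. The sketch below shows one possible validation of a per-category grade: the quote must be non-empty, stay within the 250-character limit, and appear verbatim in the submission. The `CategoryGrade` fields and the assumption that confidence lies in [0, 1] are illustrative, not AutoGrader's documented format.

```python
from dataclasses import dataclass

MAX_QUOTE_CHARS = 250  # limit stated in the text above


@dataclass
class CategoryGrade:
    category: str
    score: float
    quote: str         # evidence copied from the submission
    reasoning: str
    confidence: float  # assumed here to lie in [0, 1]


def validate_grade(grade: CategoryGrade, submission_text: str) -> list[str]:
    """Return a list of problems; an empty list means the grade passes."""
    problems = []
    if not grade.quote:
        problems.append("quote is empty")
    elif len(grade.quote) > MAX_QUOTE_CHARS:
        problems.append("quote exceeds 250 characters")
    elif grade.quote not in submission_text:
        problems.append("quote not found verbatim in submission")
    if not 0.0 <= grade.confidence <= 1.0:
        problems.append("confidence outside [0, 1]")
    return problems
```

A grade that fails any check could be sent back for regeneration or flagged for TA review rather than shown as evidence-backed.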

Stored grading rules from prior TA overrides are retrieved and applied, but only when a rule's confidence is above the threshold, to align output with instructor expectations.
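
A minimal sketch of confidence gating, assuming each stored rule carries an IF condition, a THEN action, and a confidence value. The `GradingRule` shape, the example rules, and the default threshold of 0.8 are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class GradingRule:
    condition: str     # the IF part, in plain language
    action: str        # the THEN part
    confidence: float  # how strongly the rule is trusted


def applicable_rules(rules: list[GradingRule], threshold: float = 0.8) -> list[GradingRule]:
    # Keep only rules strictly above the threshold, strongest first.
    kept = [r for r in rules if r.confidence > threshold]
    return sorted(kept, key=lambda r: r.confidence, reverse=True)


# Illustrative rules of the kind a TA override might produce.
rules = [
    GradingRule("units are missing from the final answer", "deduct 1 point", 0.92),
    GradingRule("an alternative valid proof technique is used", "award full credit", 0.85),
    GradingRule("variable names differ from the reference", "do not deduct", 0.60),
]
applied = applicable_rules(rules)
```

Rules below the threshold stay stored but are not applied, so a single unusual override does not change grading for the whole course.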

TAs review, edit, and approve. Any override triggers the Memory Updater, which extracts a generalizable rule for future use.

Every TA override becomes a reusable rule. The system accumulates institutional knowledge, progressively aligning with your pedagogical intent — no extra work required.

The grading workstation puts reference solutions, student work, and AI analysis side-by-side — so reviewers spend time judging, not searching.

Reference solution, student submission, and grading console are shown simultaneously, with pages rendered lazily for performance.

Ask the grader follow-up questions, request score adjustments in natural language, and explore evidence trails interactively.

Live pipeline status updates keep TAs informed as each stage completes, and adjacent submissions are prefetched so that moving to the next or previous one does not wait on loading.

Integrated analysis surfaces potential similarity flags alongside grade breakdowns, keeping the review holistic.

Hear from instructors and teaching assistants who've used AutoGrader in real courses.

"AutoGrader has completely changed how I think about grading at scale. The evidence-anchored scores mean students never question why they lost a point — it's right there in their own words. We went from three days of TA grading to a single afternoon review session."

"I was skeptical that an AI could capture the nuance our rubrics require, but after the first homework the policy learning had already picked up on our notation conventions. By week four I was spending more time on teaching than grading — which is exactly how it should be."

See AutoGrader in action with your own rubric and sample submissions. Our team will walk you through a live demo tailored to your course format.